Artificial intelligence offers transformative potential, but its rapid adoption in the business world has outpaced a clear understanding of its risks. Deploying AI without a clear strategy for mitigating its dangers is not just negligent; it's a direct threat to your company's security, reputation, and bottom line. This article outlines the three most significant dangers and how to protect against them.

Danger 1: Intellectual Property and Data Security Leaks

The most immediate and widespread danger of AI use comes from a fundamental misunderstanding of how many AI services work. Depending on their terms and settings, many generative AI tools may use the prompts and files users submit to train future versions of their models. When your employees use free, public AI tools, they could be inadvertently handing your company's most sensitive information to a third party that may reuse it.

So, what's the harm? Imagine an employee uploads a confidential business plan or a draft patent application to a public AI tool to "proofread it." If the provider uses that upload to train a future model, your content becomes part of what the model learns from. Months later, a competitor researching a similar market asks the same AI for business plan ideas. The AI, now trained on your proprietary strategy, could hand your competitor ideas drawn from your own intellectual property. You would have armed your competition with your best ideas.

This risk is not hypothetical. Unless training is explicitly disabled, using conversation data to improve the model is the default behavior for many consumer AI services. The only true defense is a combination of enterprise-grade, private AI tools and, most importantly, a workforce trained to understand that their prompts are not private conversations. They must know how to disable model training in every tool they use.

Danger 2: AI Catastrophes from "Hallucinations"

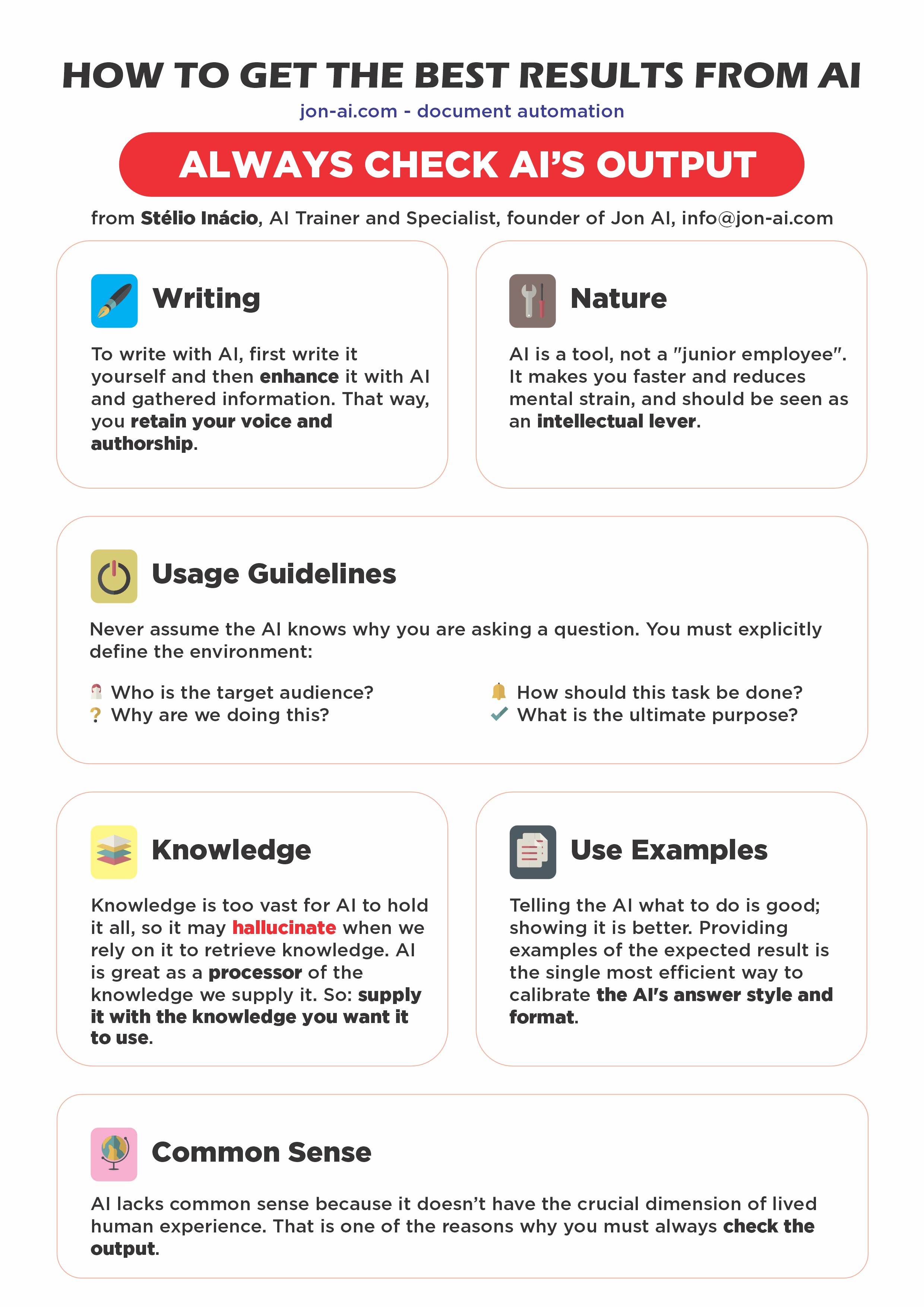

AI models are not search engines; they are prediction engines. They are designed to generate plausible text, not to state objective truths. When an AI doesn't know an answer, it will often "hallucinate"—a polite term for "make things up." Because the fabricated output is usually well-written and confident, it is dangerously easy to believe.

These hallucinations have led to real-world catastrophes:

- Legal Malpractice: Lawyers representing a client suing Avianca Airlines used ChatGPT for legal research. It invented nonexistent court decisions, complete with fake quotations, which the lawyers cited in a federal court filing. The judge fined the lawyers and their firm $5,000, and the episode became a national embarrassment.

- Financial Misinformation: The tech news site CNET used AI to write dozens of personal finance articles. The articles contained basic mathematical errors and passages accused of plagiarism, forcing CNET to issue corrections on more than half of them and severely damaging its credibility.

- Binding False Promises: A Canadian tribunal ordered Air Canada to honor a refund policy its own chatbot had invented, rejecting the airline's argument that the chatbot was responsible for its own statements. The ruling signaled that companies can be held liable for the promises their AI tools make, true or not.

A single unverified "fact" from an AI can lead to disastrous business decisions, legal liability, and irreversible reputational harm.

Danger 3: Erosion of Quality and Brand Reputation

Beyond discrete catastrophes, a subtle but corrosive danger of untrained AI use is the gradual erosion of quality. When employees rely on AI as a crutch rather than a tool, the quality of their output can decline. The results are often generic, robotic, and lacking in human insight.

Gannett, one of the largest newspaper publishers in the US, learned this the hard way when it used AI to write high school sports summaries. The articles were so comically bad, describing games with phrases like "a close encounter of the athletic kind," that they became a national laughingstock. The company alienated local readers and had to pause the program, dealing a serious blow to its journalistic reputation.

If your company's reports, marketing copy, or client communications start to feel lifeless and generic, you are on the path to brand irrelevance. AI should augment human expertise, not replace it.

The Solution: A Human Firewall

The common thread in all these dangers is human error—specifically, a lack of understanding of the technology. The most effective defense against the dangers of AI is a "human firewall": a workforce that is educated, aware, and empowered to use AI safely and effectively.

Our comprehensive training program is designed to build this firewall. We teach your employees:

- The non-negotiable rules of AI data security.

- How to spot and verify potential AI hallucinations.

- A framework for using AI to enhance quality, not dilute it.

- The specific use cases where AI is strong and where it must be avoided.

Don't wait for an AI-induced catastrophe to take these dangers seriously. The time to train your team is now.

Ready to protect your company and turn AI risk into a competitive advantage?